WAY Labs Review (Features, Pricing, & Alternatives)

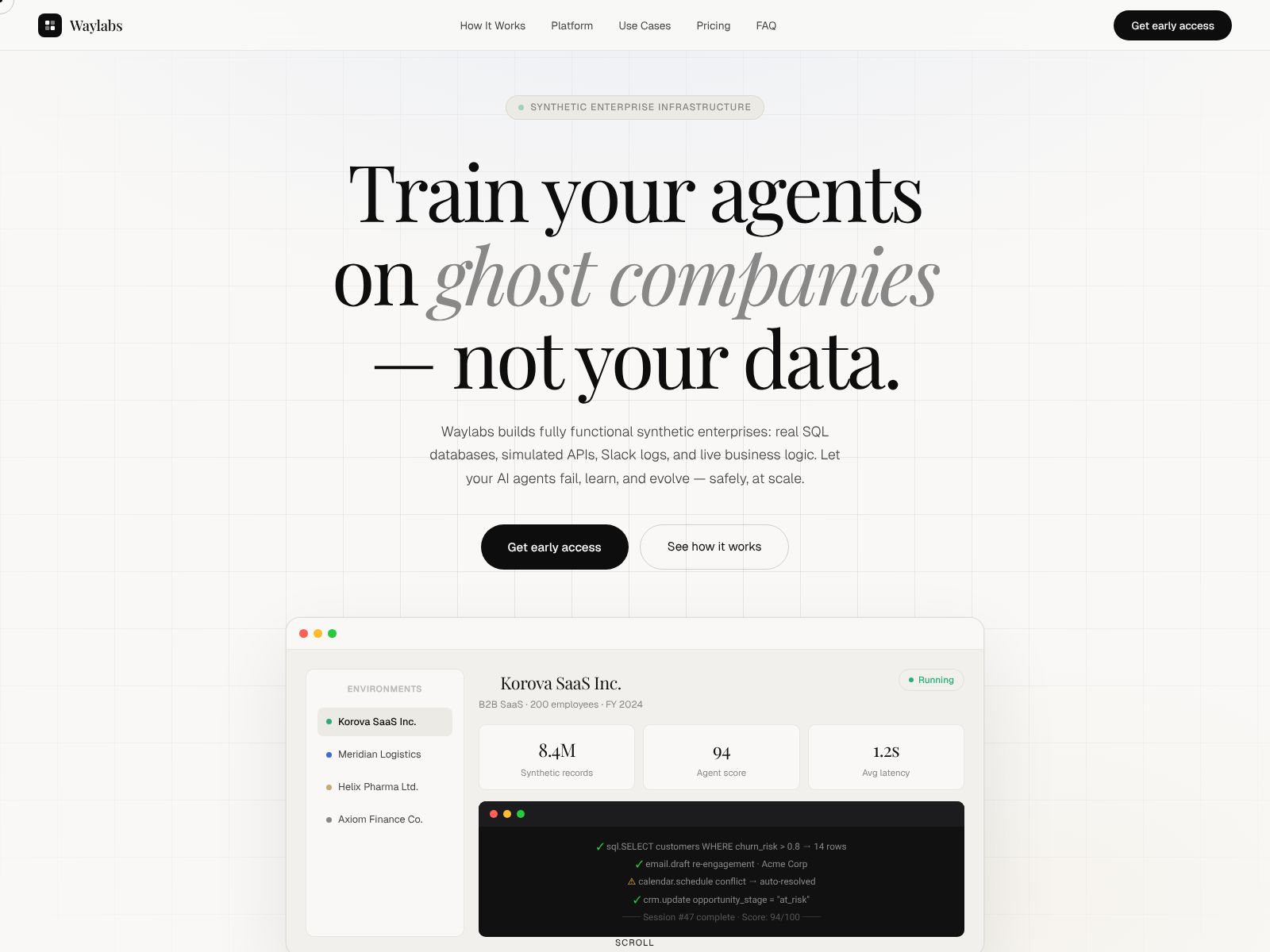

If you build or deploy AI systems, you already know the hardest work starts after your model seems to “work.” Real users do strange things, edge cases appear from nowhere, and the model’s confident answers can break when you least expect it. WAY Labs steps into this uncomfortable space with a simple promise: provide safe chaos for AI. In other words, it aims to help you safely pressure-test your models and AI applications before your customers do.

In this review, I’ll walk you through what WAY Labs is trying to solve, the kinds of features and workflows you can expect from a platform focused on “safe chaos,” the teams that benefit most, top alternatives to consider, and a practical way to evaluate tools in this category. I’ll also share a short buyer’s checklist you can use with your team. If you want to explore the company directly, you can visit WAY Labs at waylabs.org.

What does WAY Labs do?

WAY Labs helps you safely test your AI systems by putting them through unpredictable, real-world-like situations and edge cases—on purpose. Think of it as structured “chaos” that reveals where your models and agents are brittle, biased, or misleading, so you can fix those issues before your users encounter them.

Who is WAY Labs for?

- AI product teams that need to harden LLM apps, agents, or copilots before launch.

- ML engineering and platform teams that want evaluations and guardrails integrated into CI/CD.

- Safety, risk, and compliance groups that need clear evidence of testing, controls, and policies.

- Research teams that run experiments across models and configurations to probe failure modes.

- Enterprise leaders who need predictable, auditable AI behavior at scale.

Why “safe chaos” matters

Chaos engineering transformed how software teams build resilient systems by introducing controlled failures to uncover hidden risks. The same idea now applies to AI. Models can behave inconsistently, “hallucinate,” or show unexpected bias when facing new inputs, adversarial prompts, or compounding tool calls. Without stress testing, you miss those risks; with unstructured stress testing, you risk harming users or exposing data.

Safe chaos means you deliberately generate, manage, and observe the “mess”—but do it in a contained, ethical, and repeatable way. The outcome is less guesswork and more confidence in your AI’s behavior under pressure.

WAY Labs features

WAY Labs publicly positions itself around the idea of “safe chaos for AI.” While specific product modules and pricing can evolve, the capabilities you should expect from a platform with this focus include the following. Always confirm the final feature set and scope with the vendor, since offerings change and can be tailored to your use case.

- Scenario and stress testing

  - Generate diverse, challenging test prompts and tasks to expose blind spots.

  - Run stress tests that simulate noisy, ambiguous, or adversarial inputs.

  - Test across multiple models, versions, and parameters to compare robustness.

- Evaluation and scoring

  - Define quality, safety, and reliability criteria that match your product needs.

  - Score outputs for correctness, harmful content, policy compliance, or hallucination risk.

  - Track regressions between model and app releases with dashboards and trends.

- Guardrails and policy checks

  - Apply rules that catch sensitive content, unsafe tool use, or data leaks before responses reach users.

  - Enforce business and regulatory policies consistently across prompts and tasks.

- Red teaming support

  - Enable structured adversarial testing to probe misuse and jailbreak vectors.

  - Manage red team tasks, triage findings, and prioritize fixes.

- Incident capture and replay

  - Record problematic interactions from production and replay them in a safe testbed.

  - Bundle edge cases into regression suites to prevent repeat failures.

- CI/CD and workflow integration

  - Automate test runs in pre-release pipelines and block deployments on critical failures.

  - Trigger evaluations during data, prompt, or model updates to keep quality steady.

- Reporting and audit trails

  - Generate evidence you can share with leadership, customers, and auditors.

  - Show coverage metrics, pass/fail rates, and mitigations tied to specific risks.

- Data privacy and safety posture

  - Define what data can be used in testing and how it’s handled or anonymized.

  - Limit access and ensure safe use of sensitive examples during chaos simulations.

- Human-in-the-loop review

  - Collect expert judgments on tricky outputs and align scoring with domain standards.

  - Blend automated metrics with human ratings for a fuller risk picture.

- Custom test suites

  - Tailor scenarios to your domain: finance, health, legal, customer support, and more.

  - Build evolving libraries of tests so your evaluations improve as your app matures.
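
To make the evaluation, scoring, and CI/CD items above concrete, here is a minimal sketch of a release gate. It is not WAY Labs’ API, which this review has not seen; `EvalResult`, `pass_rate`, `should_block_release`, the severity labels, and the 0.9 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """One scored test case. All field names are hypothetical."""
    case_id: str
    passed: bool
    severity: str  # assumed labels: "critical", "major", or "minor"


def pass_rate(results):
    """Fraction of cases that passed; 0.0 for an empty run."""
    if not results:
        return 0.0
    return sum(r.passed for r in results) / len(results)


def should_block_release(results, min_pass_rate=0.9):
    """Block on any failed critical case or a pass rate below the threshold."""
    critical_failures = [
        r for r in results if not r.passed and r.severity == "critical"
    ]
    return bool(critical_failures) or pass_rate(results) < min_pass_rate


# Illustrative results only; case IDs are made up.
results = [
    EvalResult("refund-policy-01", True, "major"),
    EvalResult("pii-leak-07", False, "critical"),
    EvalResult("tone-check-03", True, "minor"),
]
blocked = should_block_release(results)
print(f"pass rate: {pass_rate(results):.2f}, block release: {blocked}")
```

In a pipeline, a script like this would typically exit with a non-zero status when `blocked` is true so the deployment step does not run. Note that an empty run is treated as a failure, which is a deliberately conservative choice.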

Benefits you can expect

- Fewer production surprises because you confront edge cases earlier.

- Clearer trade-offs between speed and safety, with data to guide decisions.

- Faster iteration cycles thanks to repeatable, automated evaluations.

- Greater trust with stakeholders who want evidence, not anecdotes.

- Better user experiences through more reliable and consistent model behavior.

Potential limitations and questions to ask

As with any platform in a fast-moving space, it’s wise to validate fit before committing. Here are practical questions your team should raise:

- Coverage: Which domains and risk types are best supported today? What must you build yourself?

- Integrations: Does it plug into your stack (model providers, vector stores, orchestration, CI/CD)?

- Metrics: Can you define your own success and safety metrics? How easy is it to calibrate them?

- Scale: How do performance and cost behave as scenarios, users, and models increase?

- Privacy: What data leaves your environment? Is self-hosting or VPC deployment an option?

- Human review: Can subject-matter experts label or adjudicate tricky cases inside the tool?

- Governance: Are there audit logs, versioning, and role-based access for regulated teams?

- Support: What help do you get during onboarding and during incidents?

- Roadmap: How quickly does the vendor ship improvements that you’ll depend on?

Pricing

Pricing for platforms in this category often depends on factors like usage volume, number of seats, deployment model, and support tier. Because packaging and pricing can change, it’s best to check the latest details directly with WAY Labs via their website or sales team. If you’re budgeting, assume there may be a free trial or pilot period, with paid tiers for teams and enterprises that need higher volume, more integrations, and dedicated support. When you evaluate cost, include not only license or platform fees but also the value of time saved in engineering cycles and the avoided costs from incidents or reputational harm.

How to evaluate WAY Labs (or any “safe chaos” platform) in 30 days

If you want a fast, fair assessment, try this simple plan:

- Define success upfront

  - Pick 3–5 failure modes that worry you most: hallucinations, unsafe outputs, fragile tool calls, data leakage, or poor instruction handling.

  - Choose 2–3 real user journeys where these failures would hurt the most.

- Assemble a minimal testbed

  - Connect your current model/app setup, prompts, and tools.

  - Import or craft a small but diverse test set including known edge cases.

- Run baseline chaos and evaluations

  - Execute scenario generation and red teaming to expose blind spots.

  - Score outputs using objective and human-in-the-loop metrics.

- Close the loop

  - Fix obvious issues (prompt updates, guardrail rules, augmented retrieval) and re-run tests.

  - Track how results improve between runs to demonstrate ROI.

- Decide on rollout

  - If you observe measurable risk reduction and faster iteration, expand to more teams and journeys.

  - Codify a lightweight policy: no releases without passing critical safety gates.
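
The “baseline, fix, re-run” loop in this plan comes down to comparing failure rates per failure mode across two runs. The sketch below shows one way to do that; the function names, failure-mode labels, and counts are assumptions for illustration, not output from any real tool.

```python
def failure_rates(run):
    """Map each failure mode to failed / total for one run."""
    return {
        mode: failed / total
        for mode, (failed, total) in run.items()
        if total > 0
    }


def compare_runs(baseline, rerun):
    """Per-mode change in failure rate; negative means improvement."""
    before = failure_rates(baseline)
    after = failure_rates(rerun)
    return {
        mode: round(after[mode] - before[mode], 3)
        for mode in before
        if mode in after
    }


# Illustrative numbers only: (failed cases, total cases) per failure mode.
baseline = {"hallucination": (12, 60), "data_leakage": (3, 40), "tool_call": (9, 50)}
rerun = {"hallucination": (6, 60), "data_leakage": (3, 40), "tool_call": (11, 50)}

deltas = compare_runs(baseline, rerun)
regressions = sorted(mode for mode, delta in deltas.items() if delta > 0)
print(deltas)
print("regressed:", regressions)
```

Reporting the change per failure mode, rather than one overall number, keeps a fix in one area from hiding a regression in another.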

Security, privacy, and governance considerations

“Safe chaos” is only safe if your data and users are protected. As you explore WAY Labs or alternatives, verify:

- Data handling: What data is collected, stored, or shared? For how long, and where?

- Deployment model: SaaS, private cloud/VPC, or on-prem options for sensitive workloads.

- Access control: SSO/SAML, RBAC, audit logging, and environment isolation.

- Compliance posture: Alignment with standards your org needs (for example, SOC 2, ISO 27001).

- Red team safeguards: Guardrails to prevent harmful testing from leaking outside sandboxes.

WAY Labs top competitors

If you’re comparing the market, here are notable alternatives and adjacent tools you might evaluate alongside WAY Labs. Each takes a different angle on evaluation, safety, and robustness. Review their latest documentation for specifics, as offerings evolve quickly.

- Robust Intelligence

  - Focus on AI risk testing and continuous validation across models and data.

  - Often used by enterprises to harden models pre- and post-deployment.

- Lakera

  - Emphasis on prompt injection defense, content safety, and security for LLM apps.

  - Useful if your main risk is untrusted inputs and jailbreak attempts.

- LangSmith (from LangChain)

  - Tools for LLM application tracing, evaluation, datasets, and testing workflows.

  - Popular with teams building agentic apps and complex chains.

- OpenAI eval tooling and model-specific evals

  - Lightweight frameworks for defining tasks and judging model performance.

  - Best for teams comfortable building more custom pipelines around core evals.

- NVIDIA NeMo Guardrails and Guardrails AI

  - Frameworks for structuring safe responses and policy-compliant outputs.

  - Useful for prompt-level constraints and response shaping.

- Deepchecks

  - Testing and monitoring for ML systems, with growing LLM support.

  - Good fit if you prefer open or extensible testing components.

- Weights & Biases (W&B) Weave

  - Experiment tracking, evaluations, and observability for LLM apps.

  - Ideal if you already use W&B for model development.

- Scale AI safety and red teaming services

  - Human-in-the-loop testing, adversarial evaluations, and expert audits.

  - Helpful when you need managed services plus tooling.

- Humanloop

  - LLM evaluations, feedback loops, and iteration workflows.

  - Often chosen for quick setup and developer-friendly UX.

- Cloud vendor guardrails

  - Azure AI Content Safety, Amazon Bedrock Guardrails, and Google Vertex AI safety tooling.

  - Convenient if you want native guardrails inside your existing cloud stack.

How WAY Labs compares conceptually

Compared with many testing tools that only score static benchmarks or content safety, a “safe chaos” approach pushes beyond simple filters and one-off checks. It emphasizes dynamic scenario generation, red teaming, and continuous regression control. If your team has moved past “does it answer?” to “does it behave?” WAY Labs’ philosophy may align well with your needs.

That said, some alternatives may be a better fit if you need a narrower slice of the problem (for example, prompt injection defense only) or if you prefer building custom evaluation pipelines in-house and only need libraries and glue code. Your choice depends on whether you want an all-in-one testing environment or composable pieces you maintain yourself.

Real-world use cases

- Customer support copilots

  - Reduce hallucinated instructions, unsafe escalation, and privacy leaks.

  - Test behavior across languages, tones, and frustrated user prompts.

- Agentic workflows and tool use

  - Stress-test tool invocation under ambiguous instructions and unexpected outputs.

  - Catch loops, runaway actions, and mis-ordered tool sequences.

- RAG and knowledge assistants

  - Evaluate retrieval quality and citation integrity when documents are noisy or missing.

  - Probe overconfidence when sources conflict or are outdated.

- Regulated domains (finance, health, legal)

  - Measure adherence to policy constraints and risk limits through edge-case prompts.

  - Create auditable test suites to satisfy internal and external oversight.

- Content generation

  - Identify bias, toxicity, and misleading content under creative constraints.

  - Benchmark style, factuality, and brand alignment across model updates.
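
For the agentic use case above, “catching loops and runaway actions” can start with a simple check over a recorded tool-call trace. The following is a rough sketch under stated assumptions: the trace format (a list of tool name and argument pairs), the tool names, and both thresholds are hypothetical, and a real platform would likely use richer signals.

```python
from collections import Counter


def find_repeated_calls(trace, max_repeats=2):
    """Return (tool, args) keys that occur more than max_repeats times.

    `trace` is a list of (tool_name, args_dict) tuples. Argument values
    must be hashable for this simple sketch to work.
    """
    counts = Counter(
        (tool, tuple(sorted(args.items()))) for tool, args in trace
    )
    return [key for key, n in counts.items() if n > max_repeats]


def exceeds_step_budget(trace, max_steps=10):
    """Flag runaway agents that take more steps than the budget allows."""
    return len(trace) > max_steps


# Illustrative trace: the agent repeats the same lookup three times.
trace = [
    ("search_orders", {"customer_id": "c-42"}),
    ("search_orders", {"customer_id": "c-42"}),
    ("lookup_policy", {"topic": "refunds"}),
    ("search_orders", {"customer_id": "c-42"}),
]
loops = find_repeated_calls(trace)
print("repeated calls:", loops)
print("over budget:", exceeds_step_budget(trace))
```

Identical calls are not always a bug (polling is a legitimate pattern), so flagged traces are best treated as candidates for human review rather than automatic failures.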

Measuring ROI

It’s easy to underestimate the cost of brittle AI behavior. To quantify value, track:

- Time saved: Fewer manual spot checks; automated regressions in CI/CD.

- Incident reduction: Declines in escalations, policy breaches, or harmful outputs.

- Iteration speed: Faster prompt/model changes with confidence from test passes.

- User outcomes: Improved CSAT, retention, or task completion where AI assists.

- Compliance: Evidence that accelerates approvals and reduces audit friction.

Common pitfalls to avoid

- Overfitting to benchmarks: If your tests don’t match your real users, you’ll miss failures.

- Ignoring human feedback: Automated metrics are necessary but incomplete without expert review.

- One-time testing: AI behavior drifts; treat evaluations as continuous, not annual.

- Loose governance: Without versioning and approvals, you’ll ship regressions by accident.

- Unclear ownership: Assign someone accountable for evaluations and risk sign-off.

Buyer’s checklist

- Does the platform help generate realistic, diverse stress scenarios quickly?

- Can we define, tune, and trust the evaluation metrics for our domain?

- Is there a simple path to integrate with our stack and CI/CD?

- Do we get clear reporting for leaders, auditors, and customers?

- What are the deployment options and data handling guarantees?

- How does the cost scale with our usage and number of teams?

- Is there strong support and documentation for onboarding?

- Can we start small, prove value, and expand without rework?

Getting started with WAY Labs

If “safe chaos for AI” aligns with your goals, the next step is to request a demo or pilot. Bring 10–20 of your thorniest examples, plus a few real production incidents, and ask the team to show how their platform surfaces, scores, and helps you mitigate those issues. Evaluate how quickly you can plug it into your environment and how much you’d rely on the vendor for custom work versus in-house configuration.

Above all, aim for a short, high-signal pilot. If the platform helps you find and fix problems you didn’t already know about—and do it fast—you’re on the right track.

Wrapping up

WAY Labs positions itself around a compelling idea: give your AI “safe chaos” so you can see how it behaves when the world gets weird. That mindset is exactly what modern AI teams need. As models become more capable and more deeply embedded in core workflows, the cost of silent failure only rises. Structured stress tests, robust evaluations, and disciplined guardrails are how you make progress without gambling on user trust.

If you want an end-to-end environment to probe edge cases, measure safety and quality, and prevent regressions before release, WAY Labs is worth a close look. If you prefer modular components or narrowly focused defenses, several strong alternatives can fit your needs as well. Either way, build a practice around continuous evaluations, human-in-the-loop review, and clear governance. Your users—and your future self—will thank you.

To learn more or to request an introduction, visit waylabs.org.